Amazon Bedrock AgentCore: The Infrastructure Layer Your AI Agents Have Been Missing

Building an AI agent that works in a demo is one thing. Getting it to reliably work in production — across thousands of concurrent users, with proper security, memory, and observability — is an entirely different challenge. If you’ve ever tried to take an AI agent from prototype to production, you know exactly what I’m talking about. Months of undifferentiated infrastructure work: session management, identity controls, persistent memory, tool integrations, monitoring. All of it built from scratch, all of it before you’ve written a single line of your actual business logic.

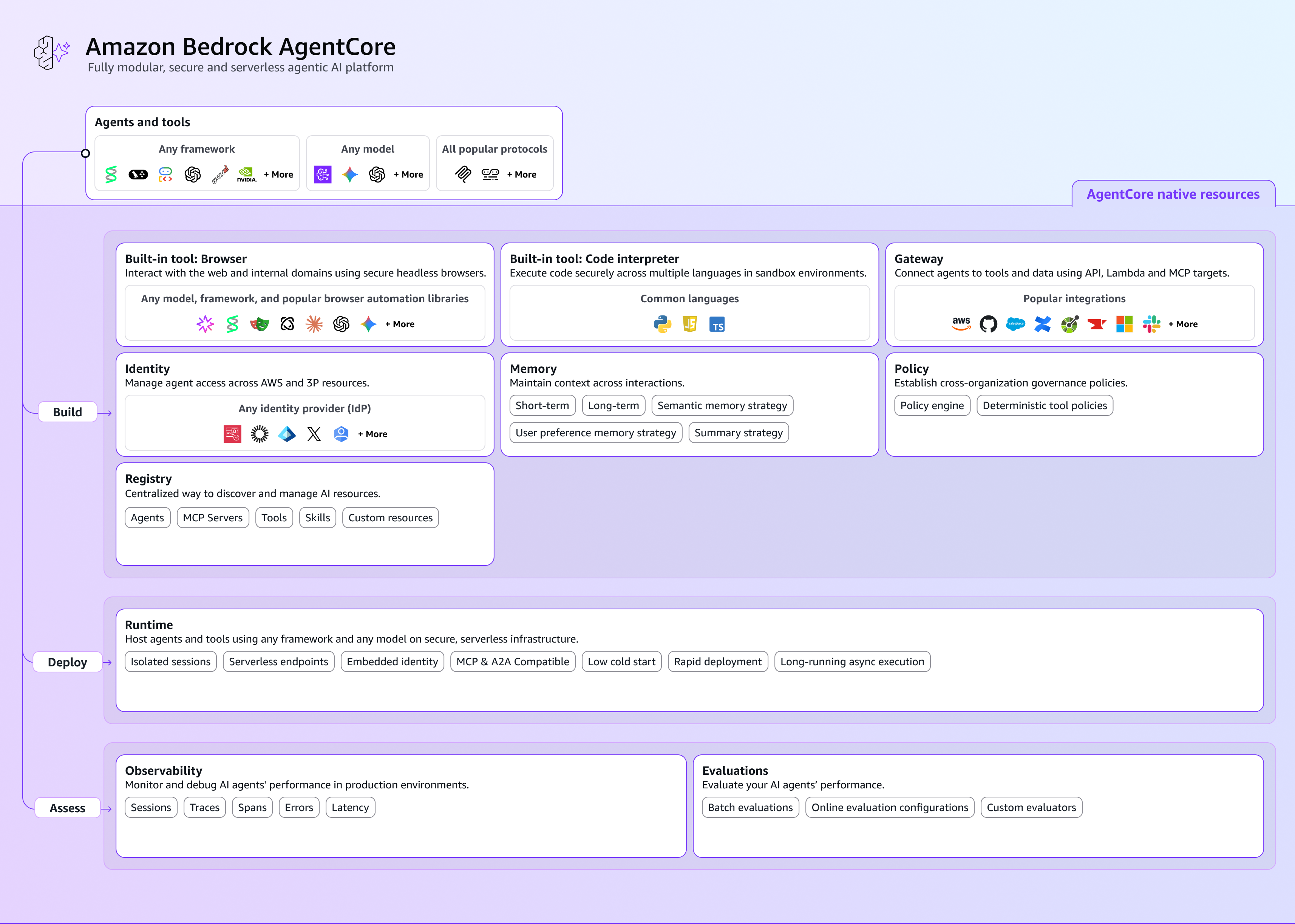

Amazon Bedrock AgentCore is AWS’s answer to that problem. Released to general availability in October 2025, it’s a modular, enterprise-grade platform designed to handle the operational complexity of running AI agents at scale — so your teams can focus on what actually differentiates your business.

What Is AgentCore?

At its core, AgentCore is a collection of managed services that form the production infrastructure layer for AI agents. Think of it the way you think about a cloud platform itself — you wouldn’t hand-roll your own data centers, your own DNS, or your own IAM system. AgentCore takes that same philosophy and applies it to the agentic AI stack.

What makes it particularly practical for large organizations is that it’s both framework-agnostic and model-agnostic. Whether your teams are building with LangGraph, CrewAI, LlamaIndex, or the OpenAI Agents SDK, and whether they’re running Claude, GPT-4, Amazon Nova, or Llama — AgentCore works with all of it. You don’t have to standardize your entire engineering org on a single stack to benefit from a shared infrastructure layer.

The components are also fully modular. You can adopt individual services independently or use them together as an integrated platform. Let’s walk through the core building blocks.

The Core Features

Runtime: Secure Execution at Scale

The AgentCore Runtime is the execution engine. Every agent session runs in its own dedicated microVM — an isolated environment with its own CPU, memory, and filesystem. When a session ends, everything is wiped. This means cross-session data leakage is prevented by design, not by policy.

The runtime handles auto-scaling from zero to thousands of concurrent sessions and supports workloads ranging from low-latency real-time conversations to 8-hour asynchronous tasks — covering the full spectrum from customer-facing chatbots to complex back-office workflows. For teams building voice applications, bidirectional streaming support enables agents that can simultaneously listen and respond, handle interruptions, and adapt mid-conversation.
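To make the isolation property concrete, here is a minimal sketch. It is not the AgentCore API: `SessionSandbox` is a hypothetical name, and a temporary directory stands in for a microVM's private filesystem. It shows only the behavior described above, where each session gets its own state and that state is destroyed when the session ends.

```python
import shutil
import tempfile
from pathlib import Path


class SessionSandbox:
    """Hypothetical stand-in for a per-session microVM (illustration only)."""

    def __init__(self, session_id: str) -> None:
        self.session_id = session_id
        # A private temp directory plays the role of the isolated filesystem.
        self.root = Path(tempfile.mkdtemp(prefix=f"session-{session_id}-"))

    def write(self, name: str, data: str) -> None:
        (self.root / name).write_text(data)

    def read(self, name: str) -> str:
        return (self.root / name).read_text()

    def close(self) -> None:
        # Wipe everything the session produced.
        shutil.rmtree(self.root, ignore_errors=True)


alice = SessionSandbox("alice")
bob = SessionSandbox("bob")
alice.write("notes.txt", "alice's private data")

# Bob's sandbox has a different root, so Alice's file is not visible there.
bob_sees_file = (bob.root / "notes.txt").exists()

alice.close()
bob.close()
alice_wiped = not alice.root.exists()
```

A directory is a far weaker boundary than a microVM, which also isolates CPU and memory. The sketch only illustrates the lifecycle: create per session, never share, wipe on close.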

Memory: Agents That Actually Learn

One of the most frustrating limitations of early AI agents was their amnesia. Every conversation started from scratch. AgentCore Memory solves this with a layered approach:

- Session memory maintains conversation context within an active session, enabling coherent multi-turn dialogue

- Long-term memory extracts and stores facts, preferences, and summaries across sessions — agents can recognize returning users and adapt accordingly

- Episodic memory allows agents to learn from past interactions, building toward more human-like adaptive behavior over time

For multi-agent systems, memory branching lets multiple specialized agents share a session while maintaining their own isolated context — similar to how Git branches work. A travel coordinator agent can simultaneously delegate to a flight agent and a hotel agent, each maintaining their own memory within the shared session.
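The branching idea can be sketched in a few lines. This is a simplified toy model, not the AgentCore Memory API: `BranchingSessionMemory` and its methods are hypothetical, and the trip details are made up for illustration. Each agent sees the shared session history plus only its own branch.

```python
from collections import defaultdict


class BranchingSessionMemory:
    """Toy model of one shared session with per-agent branches (hypothetical)."""

    def __init__(self) -> None:
        # Events visible to every agent in the session.
        self.shared: list[str] = []
        # Events private to a single branch, keyed by branch name.
        self.branches: dict[str, list[str]] = defaultdict(list)

    def add_shared(self, event: str) -> None:
        self.shared.append(event)

    def add_to_branch(self, branch: str, event: str) -> None:
        self.branches[branch].append(event)

    def context_for(self, branch: str) -> list[str]:
        # An agent sees the shared history plus only its own branch.
        return self.shared + self.branches[branch]


memory = BranchingSessionMemory()
memory.add_shared("user: plan a trip to Lisbon, 3-6 May")
memory.add_to_branch("flights", "flight agent: searching LIS arrivals")
memory.add_to_branch("hotels", "hotel agent: searching Alfama hotels")

flight_context = memory.context_for("flights")
hotel_context = memory.context_for("hotels")
```

Here the flight agent's context contains the user's request and its own search note, but nothing the hotel agent wrote, which is the isolation the Git analogy is pointing at.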

Gateway: Solving the Integration Problem

Connecting agents to enterprise tools at scale creates a mathematical problem: with M agents and N tools, you’re looking at M×N potential integrations, each requiring custom code. AgentCore Gateway collapses that complexity by acting as a centralized MCP (Model Context Protocol) tool server.
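The arithmetic is worth seeing with numbers. The sketch below assumes the usual hub-and-spoke reasoning: with a central gateway, each agent connects once and each tool is registered once, giving roughly M+N connections instead of M×N. The agent and tool counts are illustrative, not figures from AWS.

```python
def point_to_point(agents: int, tools: int) -> int:
    # Every agent integrates with every tool directly.
    return agents * tools


def via_gateway(agents: int, tools: int) -> int:
    # Each agent connects once to the gateway; each tool is registered once.
    return agents + tools


agents, tools = 10, 50  # illustrative numbers, not from the article
direct = point_to_point(agents, tools)
hub = via_gateway(agents, tools)
print(f"direct: {direct} integrations, via gateway: {hub}")
# prints "direct: 500 integrations, via gateway: 60"
```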

The practical implication is significant: your existing REST APIs, Lambda functions, and OpenAPI specs can be exposed as agent-ready tools with zero infrastructure rewrites. Gateway handles protocol translation, throttling, payload transformation, and both inbound (OAuth, IAM) and outbound (API keys, OAuth 2.0) security — all managed for you. Out of the box, it integrates with Salesforce, Slack, Jira, GitHub, Zoom, and Databricks, among others.

Agents can also perform semantic tool discovery across hundreds of available tools, making tool selection up to 3x faster than exhaustive search approaches.
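As a rough illustration of what semantic discovery means, the sketch below ranks tools by how similar their descriptions are to the agent's request. Everything here is an assumption for demonstration: the tool catalog is hypothetical, and word counts with cosine similarity stand in for the learned embeddings a real system would use. It is not how Gateway implements search.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    # Toy "embedding": word counts. A real system would use a learned embedding model.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[w] * b[w] for w in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# Hypothetical tool catalog: name -> description.
TOOLS = {
    "create_jira_ticket": "create a new issue ticket in jira",
    "send_slack_message": "send a message to a slack channel",
    "query_sales_data": "run a query over sales data in the warehouse",
}


def discover(query: str, top_k: int = 1) -> list[str]:
    # Rank every tool by similarity to the query and keep the best matches.
    q = embed(query)
    ranked = sorted(TOOLS, key=lambda name: cosine(q, embed(TOOLS[name])), reverse=True)
    return ranked[:top_k]


best = discover("post a message to the team slack channel")
# best == ["send_slack_message"]
```

The point is that the agent only ever reasons over the top few candidates rather than the full catalog, which is where the time saving comes from.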

Identity: Zero-Trust for Autonomous Agents

When an agent acts on behalf of a user — accessing their calendar, reading their email, submitting a form in their name — the security model has to account for that delegation chain. AgentCore Identity manages the full authentication and authorization lifecycle with a zero-trust approach.

It handles both directions:

- Inbound: validates every user or system accessing your agent via AWS IAM or OAuth

- Outbound: enables agents to access external services on behalf of users via secure OAuth 2.0 flows, storing tokens in an encrypted credential vault

This works with the identity providers most enterprises already use, including Amazon Cognito, Okta, Microsoft Entra ID (formerly Azure Active Directory), and Auth0, without requiring user migration. Agents can access Salesforce, Slack, GitHub, and similar services securely without hardcoded credentials anywhere in your codebase.
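The outbound half of that model can be sketched as a vault keyed by user and provider. This is a hypothetical illustration, not the AgentCore Identity API: `CredentialVault` and `ConsentRequired` are invented names, the token is a placeholder, and encryption at rest is omitted for brevity. What it shows is that the agent asks for a token at call time, scoped to one user and one provider, instead of carrying credentials in code.

```python
import time
from dataclasses import dataclass


@dataclass
class StoredToken:
    access_token: str
    expires_at: float  # Unix timestamp


class ConsentRequired(Exception):
    """Raised when the user has not authorized the provider, or the token expired."""


class CredentialVault:
    """Hypothetical in-memory vault; a real one would encrypt tokens at rest."""

    def __init__(self) -> None:
        self._tokens: dict[tuple[str, str], StoredToken] = {}

    def store(self, user_id: str, provider: str, token: str, ttl_seconds: float) -> None:
        self._tokens[(user_id, provider)] = StoredToken(token, time.time() + ttl_seconds)

    def get(self, user_id: str, provider: str) -> str:
        entry = self._tokens.get((user_id, provider))
        if entry is None or entry.expires_at <= time.time():
            # The agent must send the user through the OAuth flow again.
            raise ConsentRequired(f"{user_id} must authorize {provider}")
        return entry.access_token


vault = CredentialVault()
vault.store("user-123", "github", "example-token", ttl_seconds=3600)

token = vault.get("user-123", "github")  # scoped to this user and provider
try:
    vault.get("user-456", "github")      # a different user has no token
    other_user_allowed = True
except ConsentRequired:
    other_user_allowed = False
```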

Code Interpreter and Browser: Expanding What Agents Can Do

Two capabilities dramatically expand the surface area of tasks agents can tackle:

AgentCore Code Interpreter gives agents the ability to write and execute code dynamically in isolated sandbox environments. Supporting Python, JavaScript, and TypeScript with up to 8-hour execution windows, it’s particularly valuable for analytical tasks — financial modeling, data transformation, statistical analysis — where an agent needs to go beyond language generation into actual computation.

AgentCore Browser provides a serverless, cloud-based browser runtime so agents can interact with web applications: navigate pages, fill forms, extract data, click elements. Every session is fully isolated, and all actions are logged to CloudTrail with session replay available for audit purposes. No browser fleet to manage, no scaling headaches.

Observability, Policy, and Evaluations: Running Agents You Can Trust

Three services address what is arguably the hardest part of production AI: knowing what your agents are doing, enforcing boundaries around what they’re allowed to do, and continuously validating that they’re doing it well.

AgentCore Observability automatically emits OpenTelemetry traces capturing every model invocation, tool call, and reasoning step. Built-in CloudWatch dashboards track token usage, latency, session duration, and error rates. Integrations with Datadog, Dynatrace, LangSmith, and Langfuse bring this data into whatever monitoring stack your team already uses.

AgentCore Policy lets compliance, security, and development teams author guardrails in natural language — AWS automatically converts them to Cedar policies that intercept tool calls in real time before execution. An agent can be blocked from accessing production databases, restricted from sending external emails, or limited to read-only operations — all without custom middleware.
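To show what intercepting a tool call before execution looks like, here is a small sketch. It is written in plain Python rather than Cedar, and the rule set, agent name, and tool names are all hypothetical. The one thing borrowed from Cedar is its decision model: a matching forbid always wins, and a request with no matching permit is denied by default.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ToolCall:
    agent: str
    tool: str
    args: dict


@dataclass
class Rule:
    effect: str                          # "permit" or "forbid"
    matches: Callable[[ToolCall], bool]  # predicate over the attempted call


def is_allowed(call: ToolCall, rules: list[Rule]) -> bool:
    # Mirrors Cedar's decision model: any matching forbid wins,
    # and with no matching permit the default is deny.
    matched = [r.effect for r in rules if r.matches(call)]
    return "forbid" not in matched and "permit" in matched


# Hypothetical rules for a support agent.
RULES = [
    Rule("permit", lambda c: c.agent == "support-agent" and c.tool.startswith("db.")),
    Rule("forbid", lambda c: c.tool == "db.write"),
    Rule("forbid", lambda c: c.tool == "email.send"
         and not c.args.get("to", "").endswith("@example.com")),
]


def guarded_invoke(call: ToolCall) -> str:
    if not is_allowed(call, RULES):
        return "blocked"
    return "executed"  # a real interceptor would forward the call to the tool here


read_result = guarded_invoke(ToolCall("support-agent", "db.read", {"table": "orders"}))
write_result = guarded_invoke(ToolCall("support-agent", "db.write", {"table": "orders"}))
email_result = guarded_invoke(ToolCall("support-agent", "email.send", {"to": "x@other.org"}))
```

The read goes through, while the write and the external email are blocked before any tool code runs. Because the check is a pure function of the call and the rules, the outcome is deterministic regardless of what the model generated.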

AgentCore Evaluations runs continuous quality assessment against production traffic using 13 built-in evaluators covering helpfulness, tool selection accuracy, output reliability, and more. It supports LLM-as-Judge scoring for custom quality dimensions, enabling A/B testing on major updates and automated drift detection with alerts.
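Drift detection in particular is easy to picture with a toy example. The sketch below is one simple way to do it, assuming scores in the range 0 to 1 from a single evaluator; the scores, the `detect_drift` helper, and the 0.05 tolerance are all hypothetical and not taken from AgentCore.

```python
from statistics import mean


def detect_drift(baseline: list[float], recent: list[float], tolerance: float = 0.05) -> bool:
    """Flag drift when the recent mean score falls more than `tolerance` below baseline."""
    return mean(baseline) - mean(recent) > tolerance


# Hypothetical judge scores in [0, 1] for one evaluator, e.g. helpfulness.
baseline_scores = [0.90, 0.88, 0.92, 0.91]
stable_scores = [0.89, 0.90, 0.91, 0.88]
degraded_scores = [0.80, 0.78, 0.83, 0.79]

stable_alert = detect_drift(baseline_scores, stable_scores)      # small dip, no alert
degraded_alert = detect_drift(baseline_scores, degraded_scores)  # large drop, alert
```

A production setup would likely use a statistical test and a rolling window rather than a fixed threshold on two means, but the shape is the same: score live traffic, compare against a known-good baseline, and alert on a sustained drop.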

Practical Applications

The capabilities above become concrete when you look at what organizations are actually building.

Healthcare: Accelerating Prior Authorization

Cohere Health built their Review Resolve™ platform on AgentCore for medical necessity reviews, a workflow that routinely spans multiple hours and requires strict audit trails, data isolation, and regulatory compliance. AgentCore’s extended session support and CloudTrail integration were prerequisites, not nice-to-haves. The expected outcome is a 30–40% reduction in review turnaround times, directly impacting patient care timelines.

Marketing: Campaign Automation at Scale

Epsilon (Publicis Groupe) built an Intelligent Campaign Automation platform using AgentCore. The result: 30% reduction in campaign setup time, 20% increase in personalization effectiveness, and 8 hours saved per marketing team per week. That’s time shifted from configuration work back to strategy.

Telecom R&D: Knowledge at Scale

Ericsson deployed agents across complex 3G/4G/5G/6G systems spanning millions of lines of code and thousands of interconnected subsystems. AgentCore enables their R&D workforce to surface knowledge across this complexity at a scale that wasn’t previously feasible; they’ve reported double-digit productivity gains across tens of thousands of employees.

ML Operations: Compressing Time-to-Insight

Amazon Devices used AgentCore to power specialized agents for ML model fine-tuning. A task that previously required days of engineering time, object detection model fine-tuning, was reduced to under one hour. At the pace of ML iteration, that’s a qualitative change in how fast teams can experiment and ship.

The Organizational Benefits

Beyond individual features, a few themes emerge for organizations evaluating AgentCore:

Speed to production. The infrastructure that used to take months to build — session management, identity, memory, observability — is available on day one. Teams spend that time on differentiated business logic instead.

Governance without gatekeeping. Policy and Evaluations together give security and compliance teams real controls over agentic systems without requiring them to review and approve every code change. Rules are authored in natural language and enforced deterministically in real time.

Multi-team flexibility. Because AgentCore is framework- and model-agnostic, a platform team can adopt it as shared infrastructure while individual product teams continue using their preferred agent frameworks. There’s no forced standardization.

Consumption-based economics. Serverless auto-scaling from zero means organizations pay for actual usage, not reserved capacity. This is especially important during the early phases of agent adoption when usage patterns are unpredictable.

Getting Started

AWS offers a generous free tier for AgentCore during initial exploration, and all services are available in the standard AWS regions. The AgentCore developer guide is a solid starting point, and the Managed Harness — a newer addition that lets you define an agent with just a model, system prompt, and tools, then handles the full execution loop automatically — is worth a close look if you want to reduce orchestration code to near zero.

The hardest part of any AI agent project isn’t the model. It’s everything around the model. AgentCore is a meaningful step toward making that surrounding infrastructure something you configure rather than something you build.